|

7/27/2023 0 Comments Silverstack screen grab They put each lens through the process, and note the serial number, to track exactly what characteristics it's giving the camera, so that it works correctly. Normally, what they do is take half an hour to forty-five minutes to calibrate a lens. Not only do you want to track the shading and distortion characteristics, but you need to apply them in real time, through the Unreal Engine, for example, in virtual production, otherwise you can't line up your objects. Their feet won’t appear to be touching the ground because we haven't accounted for the distortion. They'll be floating maybe an inch off the ground. Now, if I add a background object and my background is flat but my foreground is bent, it's going to look odd, right? Because any poles or anything I have in the foreground is going to look like it's bending, because they are real objects, but everything else in the background is not bending, not real, so it doesn't look like it matches the lens.Īlso, let's say you created a cartoon character standing next to you, or jumping around you, it won't be standing on the ground. The further you are away from the center of the lens, your corners will bend even more, as will your walls. Actually, that floor appears to be lower at the apex, at the bottom than it is in real life, because of the curvature of the lens. Now, if you're standing on a real floor in real life, if I put a wide angle 18mm lens on the camera, that floor bends. You have AR objects or background objects, or you're standing on a floor. This is a very common thing with virtual production. For virtual production, you need this data in real-time because what ends up happening is if you have a wide angle lens and you're doing the new Bugs Bunny basketball movie, Space Jam, you have animated characters standing next to live actors or basketball stars. Other than that, it’s not really an on-set thing unless you're doing virtual production. 
On set, the main thing is to make sure you're capturing the data. We have the Live Grade and Silverstack applications, which is Pomfort software for DITs that lets you see the data and test it and make sure it's working correctly, and to extract it to use for other purposes. There is on-set software that lets you see the shading and distortion characteristics. This way, they, Fujinon, don't have to have a full infrastructure. I’m hearing a lot of good things about those lenses. And the Premista Zooms from Fujinon also support the ZEISS XD technology.Īh, nice. So we will find this functionality in the ZEISS Supreme Primes and the Radiance, obviously, but what others? We are using their protocol and adding some optional information, manufacturer specific information for example.

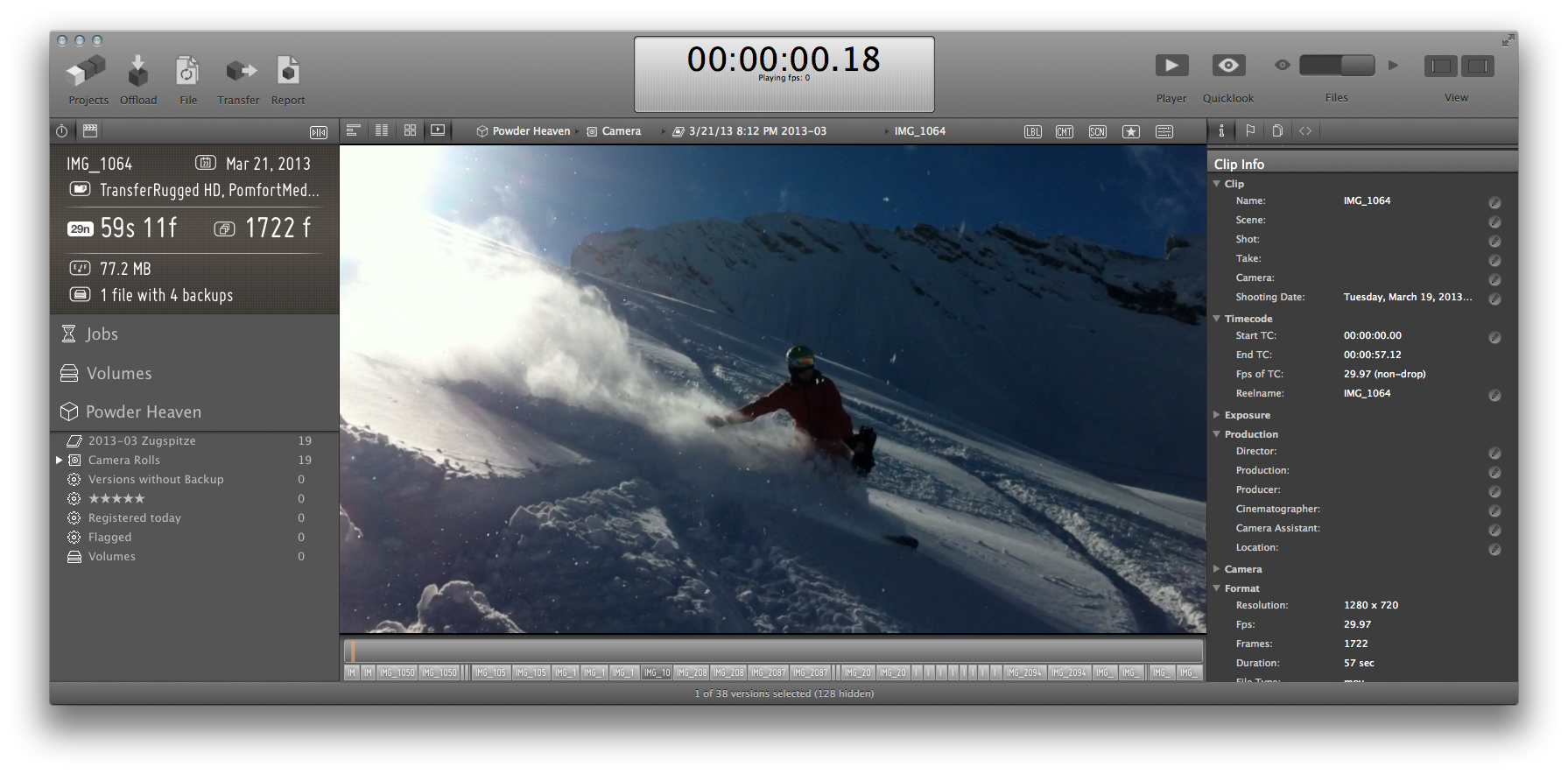

Is ZEISS extended data the same language as Cooke/i? And ZEISS provides free plugins for NUKE and for After Effects for this purpose. That's how VFX works in this regard, so the workflow does not change. The two should be seamless and look like they came from the same camera and lens combination. That's how you get a better composite and how everything looks more natural, like it was actually shot in camera, so that you don't have, like, an A cam shot of your closeup looking vastly different from your VFX shot, that followed right afterwards. Once you load those flatter-looking elements and layer everything together, you then re-apply your shading and distortion characteristics over the whole final composite image. Then, you would add your composite elements because you're going to drop things into the computer more squared off, not with the bend of a lens. What you would do first is un-shade and un-distort everything and flatten everything out. Let's say you're doing a wide shot and you have to composite it with multiple plates. The price quoted is that of a yearly subscription.Let’s discuss how this all works in real world applications. For these cameras Silverstack includes extensive metadata and media management features, professional playback controls and further tools for quality check. Silverstack offers advanced support for handling movie data coming from ARRI Alexa, RED, Canon C300 / C500, Sony F5 / F55, Digital Bolex, Blackmagic Cinema Camera, Indiecam, Ikonoskop, Canon DSLR, Nikon DSLR and GoPro cameras as well as from AJA and Atomos field recorders.
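The un-distort, composite, re-distort workflow from the interview, and the "floating feet" problem it avoids, can be illustrated with a toy radial distortion model. This is only a minimal sketch, not how ZEISS eXtended Data, the NUKE or After Effects plugins, or Unreal Engine actually implement it: the single coefficient `K1`, its value, and the function names `distort`, `undistort` and `composite_positions` are all assumptions made for illustration.

```python
import math

# Hypothetical one-coefficient radial model; real lens data carries more
# parameters than this sketch assumes.
K1 = -0.12  # negative value = barrel distortion, typical of wide-angle lenses


def distort(x, y, k1=K1):
    """Map an ideal (rectilinear) point to where the lens actually images it.

    Coordinates are normalized so that (0, 0) is the optical center.
    """
    r2 = x * x + y * y
    scale = 1.0 + k1 * r2
    return x * scale, y * scale


def undistort(xd, yd, k1=K1, iterations=20):
    """Invert distort() numerically by fixed-point iteration."""
    x, y = xd, yd
    for _ in range(iterations):
        r2 = x * x + y * y
        scale = 1.0 + k1 * r2
        x, y = xd / scale, yd / scale
    return x, y


def composite_positions(real_ideal, cg_ideal, k1=K1):
    """Return image positions of a real object and a CG object.

    The 'naive' CG position ignores the lens; the 'matched' one has the
    distortion re-applied, as in the workflow described in the text.
    """
    real_in_plate = distort(*real_ideal, k1)  # what the camera recorded
    naive_cg = cg_ideal                       # CG rendered with a perfect lens
    matched_cg = distort(*cg_ideal, k1)       # CG after re-applying distortion
    return real_in_plate, naive_cg, matched_cg


if __name__ == "__main__":
    # The actor's foot and the CG character's foot share one floor position.
    floor_contact = (0.6, -0.5)
    real, naive, matched = composite_positions(floor_contact, floor_contact)
    print(f"gap without distortion: {math.dist(real, naive):.4f}")
    print(f"gap with distortion re-applied: {math.dist(real, matched):.4f}")
```

In this sketch the gap grows with distance from the optical center, which matches the observation that corners and walls bend more than the middle of the frame. Calling `undistort` on a plate position and then `distort` on the result returns the original position, which is why flattening, compositing and re-applying leaves the live-action plate unchanged.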

Silverstack covers all essential media management activities on set, starting from the very first moment data is loaded off the camera. The powerful software tool enables high-speed, checksum-verified copy and backup processes to multiple destinations for all kinds of camera and file formats. Furthermore, Silverstack includes a First Look transcoding functionality, a comprehensive clip library and customizable report capabilities, which make it possible to document and summarize all essential data management processes for the film and postproduction teams.
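To make the idea of a checksum-verified copy to multiple destinations concrete, here is a minimal sketch using only the Python standard library. It is not Silverstack's implementation: the function names `file_digest` and `verified_copy`, and the choice of SHA-256 as the hash, are assumptions made for illustration.

```python
import hashlib
import shutil
from pathlib import Path


def file_digest(path, algorithm="sha256", chunk_size=1024 * 1024):
    """Hash a file in chunks so large camera files never load fully into memory."""
    h = hashlib.new(algorithm)
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def verified_copy(source, destinations):
    """Copy `source` into each destination directory and verify every copy.

    Returns the source digest; raises IOError if any copy does not match.
    """
    source = Path(source)
    expected = file_digest(source)
    for dest_dir in destinations:
        dest_dir = Path(dest_dir)
        dest_dir.mkdir(parents=True, exist_ok=True)
        target = dest_dir / source.name
        shutil.copy2(source, target)  # copy2 also preserves timestamps
        # Re-read the copy from the destination and compare with the source.
        if file_digest(target) != expected:
            raise IOError(f"checksum mismatch for {target}")
    return expected
```

A call such as `verified_copy("A001C001.mov", ["/Volumes/Backup1", "/Volumes/Backup2"])` (hypothetical paths) would only return once both copies hash to the same value as the source, which is the property that makes it reasonably safe to reuse a camera card.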

|
